Overview

Proof of Concept (PoC) project to assess FPGA’s role in enhancing real-time object detection compared to AI-based Python models. The main goal was to deploy the SSD model on an FPGA and contrast its performance with CPU execution.

- An SSD MobileNet model on an FPGA is implemented using Xilinx’s ZCU-104 development board.

- Utilizing the Zynq UltraScale+ ZCU104 MpSoC, feature extraction operations for the SSD MobileNet model are optimized using Vitis AI.

- FPGA’s tailored hardware enables rapid, accurate processing, perfect for real-time response applications.

Case

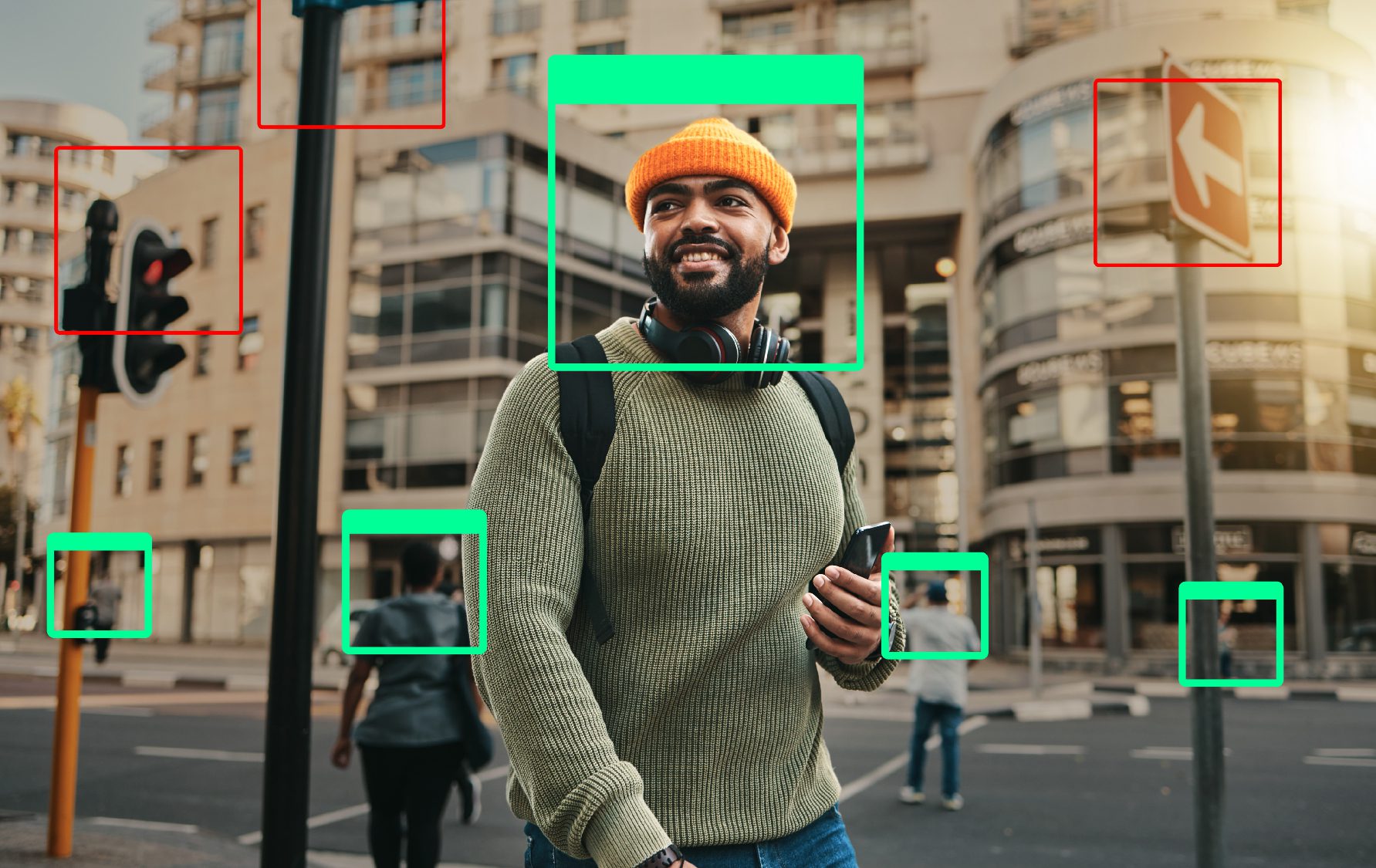

In today’s rapidly advancing world, remarkable technological advancements are happening every minute in every field. One area that has experienced a massive transformation is the convergence of Embedded systems, the Internet of Things (IoT), and cutting-edge technologies such as Artificial Intelligence (AI), Machine Learning (ML), and computer vision. These advancements have led to significant progress in the field of image detection, with object detection emerging as one of the most successful breakthroughs in computer vision. When it comes to implementing image detection, experts often debate the effectiveness of using AI-Python models versus Field Programmable Gate Arrays (FPGAs). Both AI-Python models and FPGAs possess unique strengths and can be highly effective in different scenarios. Recognizing the value of exploring different approaches, our embedded team embarked on a Proof of Concept (PoC) journey. The goal of this project was to explore how real-time object detection using FPGA utilized to enhance object detection by improving speed and efficiency in comparison to traditional methods that is using AI-based Python models. The primary objective of our PoC was to implement the Single Shot Detector (SSD) model on an FPGA and compare the results obtained with running the same model on a CPU. By focusing on the implementation of real-time object detection using FPGA, our team aimed to harness the power of FPGAs to enhance real-time vision systems. This would enable us to facilitate rapid and precise object detection in a wide range of applications.

Challenges

FPGAs offer the potential for high parallelism, low latency, and real-time processing, making them well-suited for computationally intensive tasks like object detection. By leveraging the capabilities of FPGAs, we aimed to overcome some of the limitations associated with AI-python models. Additionally, FPGAs can be customized and optimized for specific algorithms, resulting in improved efficiency and performance compared to general-purpose CPUs.Solution

Our implementation of real-time image processing with FPGA utilizes the SSD MobileNet model for its compact size, good speed, and acceptable accuracy. The ZCU-104 development board from Xilinx is chosen for its ample resources and built-in ports like Ethernet, DisplayPort, micro USB, and USB.Model Deployment:

We deployed the SSD model, an evolution of the VGG-16 model, by adding convolutional layers for classification. To optimize the model for efficient FPGA execution, we employed Vitis AI, a development platform provided by Xilinx, to quantize the original 32-bit model to an 8-bit model without significant accuracy compromise. To further improve accuracy, we employed pruning and optimization techniques. Pruning involved removing unnecessary connections between neurons, while optimization focused on fine-tuning training parameters. Once optimized, the model was compiled, generating an X model file that contained the instruction set for the Deep Learning Processing Unit (DPU) IP.

Hardware Design:

The Xilinx DPU IP was interfaced with the processing side of the ZCU-104 development board using Vivado IP integrator. The hardware design was validated, and the corresponding hardware file was created. Peta Linux, a Linux kernel, was developed for the Processing System (PS) side. During this process, the device tree was updated, and suitable drivers for peripherals were installed. The X model file was loaded onto the CPU, and a boot file for the board was created and flashed onto an SD card.

Model Evaluation:

After booting the ZCU-104 board using the SD card, we ran scripts to test the model’s performance. Performance results were obtained for a sample dataset with one thread and eight threads, yielding frame rates of 112 FPS and 290 FPS, respectively. Real-time object detection was performed using the model on a video stream from the camera, and the results were displayed on a monitor.

Our implementation of the SSD object detection model on an FPGA, specifically the ZCU-104 development board, resulted in a fast and efficient system with decent accuracy. By leveraging the parallel processing capabilities of the FPGA, we were able to overcome the computational complexity associated with object detection models, making them suitable for embedded systems. This achievement opens up possibilities for a wide range of applications, from waste segregation to interplanetary rovers. With further optimization and fine-tuning, the performance and accuracy of the model on FPGA can be enhanced even more.

The solutions include:

SSD MobileNet Neural Network Model Application running on Zynq ULtraScale+ ZCU104 MpSoC (FPGA + Processor core) Feature extraction operations performed on SSD MobileNet based architectures were quantified and optimized using Vitis AI. Deployed on a system running the Linux kernel, it exhibited superior performance compared to conventional object recognition implementations.

Impact

The real-time object detection based on FPGA technology has shown significant improvements in detecting objects in real-time. The FPGA’s specially designed hardware allows for fast and precise processing, which is ideal for applications that require immediate responses.

This enhanced system performance translates into improved accuracy and efficiency in various domains, such as:

— Garbage disposal

— Traffic analysis

— Public surveillance systems

— Self driving cars

— Interplanetary rovers, etc.

This improved system performance brings several impacts:

1. Enhanced accuracy and efficiency

2. Real-time capabilities

3. Scalability

While hardware limitations and costs exist, the system’s scalability and high performance make it a promising option for organizations seeking efficient object detection solutions. Further research and development in FPGA technology can help overcome the associated challenges and drive its wider adoption in various domains.